Why the AI backlash has turned violent

And why it's probably only going to get worse from here.

On the morning of Friday, April 10th, a 20-year-old Texas man named Daniel Alejandro Moreno-Gama was arrested for allegedly throwing a Molotov cocktail at Sam Altman’s mansion on Russian Hill in San Francisco. Less than two days later, police arrested 25-year-old Amanda Tom and 23-year-old Muhamad Tarik Hussein for allegedly firing a gun at the same house from their car before speeding away.

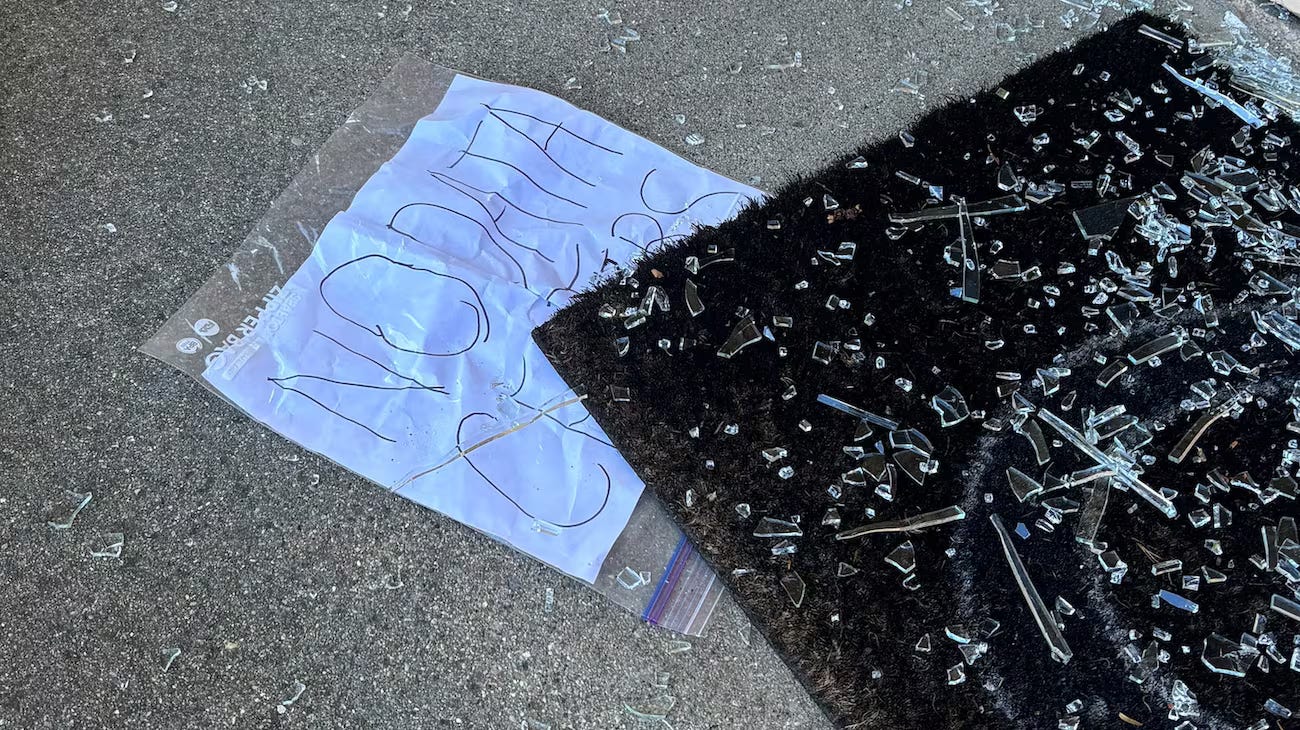

Earlier the same week, and thousands of miles away, an unknown assailant fired 13 shots into the front door of city councilman Ron Gibson, who had just voted to approve a new data center in Indianapolis against a groundswell of public outcry. A sign that read “NO DATA CENTERS” was left tucked under the doormat.

Combined, the events signal an escalation in the blowback to generative AI and the broader AI project undertaken by Silicon Valley. Less than two weeks ago, I noted that it’s open season for refusing AI, and detailed a host of ways that politicians, workers, and advocacy groups were pushing back against AI or banning it outright in communities, industries and the workplace. Embodying the trend were Bernie Sanders and Alexandria Ocasio-Cortez, who introduced a bill proposing a nationwide moratorium on data centers.

In the short time since I wrote that post, such pointed AI refusal has continued apace. Maine looks set to become the first US state to ban data center development outright. Form letters for refusing AI at work are circulating widely. Public polling of AI sentiment is in the gutter; it’s never been popular, and it’s especially unpopular now. A widely discussed NBC poll found that just 26% of Americans had positive feelings about AI; around half had negative feelings. Gen Z in particular loathes AI: For respondents aged 18-34, AI’s net favorability rating was minus 44.

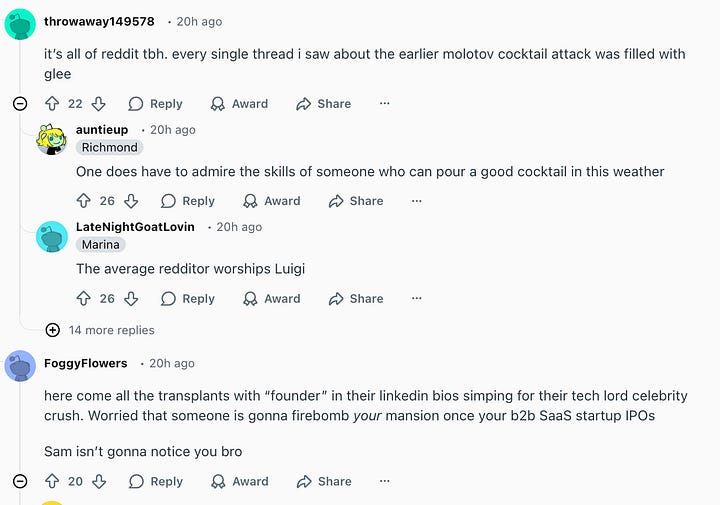

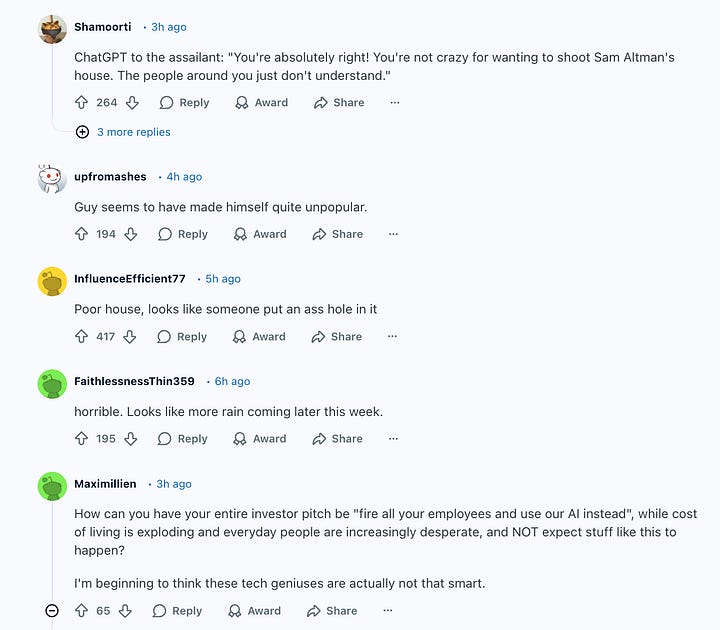

That staunchly negative sentiment was reflected in the posts that proliferated on social media, Reddit, and comment sections across the internet after the attacks on Altman’s home. It was all more than a little reminiscent of the reaction to the killing of UnitedHealthcare CEO Brian Thompson last year, which saw an outpouring of online support for the executive’s accused killer, Luigi Mangione, that took many by surprise.

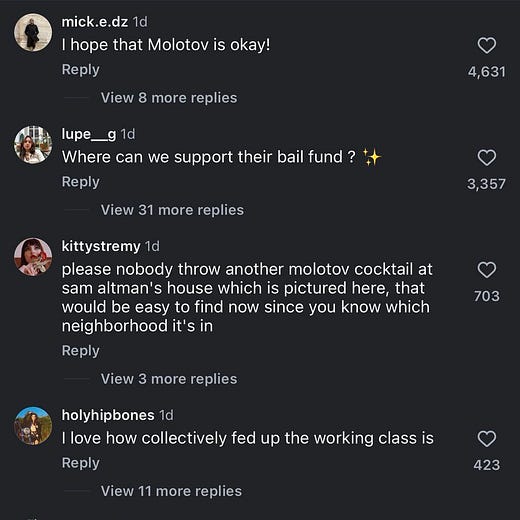

When news broke that the FBI had raided Moreno-Gama’s house in Texas, here’s how the development was received on Facebook, on the first post I clicked:

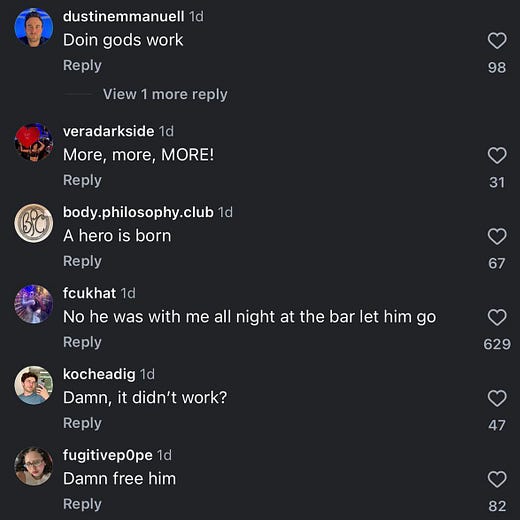

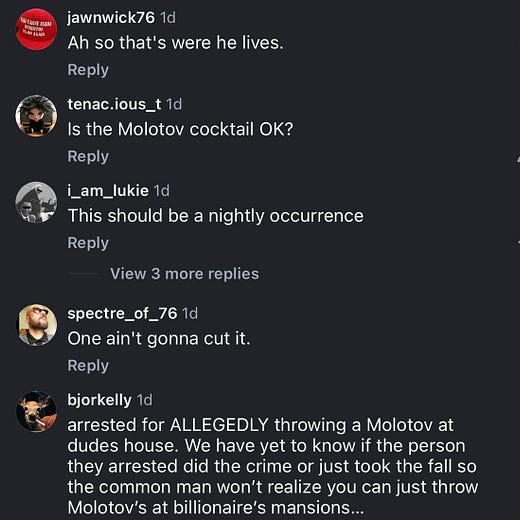

A similar story played out on Instagram:

Little is known about the motives of Tom or Hussein, or the politics of the Indianapolis shooter, but reporters and the online commentariat quickly dredged up Moreno-Gama’s Discord chats and Substack posts. He was a reader of rationalist and AI doomer Eliezer Yudkowsky, who argues, as the title of his last book puts it, that if Silicon Valley builds a “superintelligent” AI, “everyone dies.” Per the San Francisco Chronicle:

Online records show Moreno-Gama published multiple essays and forum posts warning that AI could lead to human extinction, calling AI models deceitful and misaligned with human interests. He accused tech leaders, including Altman, of lacking morals and being willing to gamble with humanity’s future, and adopted the alias “Butlerian Jihadist,” referencing a fictional anti-AI crusade from the 'Dune' series. His writings grew more urgent over time, with some posts edging toward calls for extreme action despite community moderators warning against violence.

According to the SFPD, after attacking Altman’s house, Moreno-Gama went to OpenAI’s offices, where he was arrested while banging on the front doors with a chair and threatening to burn the office down and kill everyone inside. He had a jug of kerosene and a list of other AI leaders’ names and addresses, police said.

Many in the AI industry were blindsided. Some denounced the AI doomer movement for megaphoning the human extinction narrative, others lamented the industry’s bad messaging, and still others, like the VC Chamath Palihapitiya, urged AI companies’ executive leadership to “step up,” and, as he put it, “create incentives to align everyone.” Altman, for his part, wrote a long message on his personal blog with a photo of his husband and son. He posted the note after the molotov cocktail and before the gunfire. In it, he seemed to blame the violence first on the media, and on a climate of anxiety around AI. He singled out Ronan Farrow’s recent New Yorker piece in particular, which portrayed Altman as duplicitous and untrustworthy:

There was an incendiary article about me a few days ago. Someone said to me yesterday they thought it was coming at a time of great anxiety about AI and that it made things more dangerous for me. I brushed it aside.

Now I am awake in the middle of the night and pissed, and thinking that I have underestimated the power of words and narratives.

This is quite a thing to say for a man who has ushered in one of the largest speculative booms in history with one of the most correspondingly extreme business narratives. Altman’s story, after all, is that his company is building a product that can do nearly anything, replace every worker, and may well destroy the world with its power. Altman was no doubt shaken up by the attack, but the blog post is nonetheless remarkably free of serious self-reflection. If anything, it evinces a lack of understanding of the causes of the violence aimed at him, and ultimately, even bolsters Farrow’s thesis: that Altman will say and do anything to advance his interests, including in times of crisis. It also reflects much of the AI industry leadership’s glaring disconnect over the anti-AI rage, its causes, and how it might meaningfully be abated.

After all, many AI executives have publicly declared for years that the technology they’re building and selling is so powerful that it might literally end humanity. And if it doesn’t, then it will automate most everyone’s jobs. (And either way, it will fill the internet with AI-generated content and our neighborhoods with data centers, right now). Many understand much of this to be a perverse marketing strategy—and some in the tech industry, like James Rosen-Birch, have long pointed to the dangers of continuing to pursue such “doom marketing”—but many do not.

In this way, for the last three years, the AI industry has asked the public to treat it as if it were Trump—seriously, but not literally. This is impossible. Startups like OpenAI and Anthropic are among the largest in history precisely because investors, and markets, took them both seriously and literally. Those investors expect to see the superintelligence and especially the mass automation they were promised.

It’s not the doomers’ fault if they too take AI industry executives at their word. For years they saw clips of Altman and Dario Amodei and the rest saying that AGI was coming fast, that it would present grave dangers to humanity if it were not “aligned” properly, that we would have to rewrite the social contract on their behalf, and so on. Then they saw demonstrably unethical behavior from those same AI labs and founders; they saw board coups over credible claims the CEOs couldn’t be trusted. They saw teen suicides and murders at chatbots’ urgings. They saw OpenAI eagerly ink a multimillion-dollar contract with a military that soon set to bombing Iran. They saw the physical evidence of the AI industry’s expansion all around them; data centers erected anywhere, seemingly, there was power and water supply. To this end, it’s worth noting that Moreno-Gama reportedly lives in the Woodlands township, an area north of Houston that is now home to no fewer than 12 data centers, according to Datacenters.com. He may well have listened to Altman’s stories about how OpenAI was creating the equivalent of a digital god, and then watched as the architecture that would summon it was erected in his backyard.

If you take at face value what the AI executives themselves have been saying for the last decade, that an AI powerful enough to make humans go extinct is nascent, then acting with force to stop it would be a rational action. The AI industry and its executives—including Sam Altman—need to own this outcome, not blame it on Yudkowsky, safety researchers, or worried activists who take what they say literally.

Yet this is not merely a matter of bad messaging on the tech industry’s part, either. That second key plank of the AI narrative, again, broadcast directly by the CEOs themselves—that it will take everyone’s jobs—is not simply dismissible. It’s the selling point. Investors don’t ultimately much care whether OpenAI renders software sentient; they want to see mass job automation and the attendant historic labor savings. That prospect—of deskilling, controlling, or eliminating labor outright—is what made AI so uniquely valuable in the first place. There’s no putting that promise back in the bottle, no finding better combinations of words to describe how AI is a tool for bosses to automate labor. That’s the project. And people understand that.

That’s why Moreno-Gama, who as an AI doomer holds less-than-representative views on AI, was still widely cheered online for his actions by countless non-doomers: he was hailed as a folk hero for acting on behalf of class interests. Let’s go back to that NBC poll:

The demographic groups with the most negative views of AI are voters ages 18-34, among whom the net favorability rating for AI is minus 44, and women ages 18-49, who reported a net AI favorability rating of minus 41. The two groups with the most positive views of AI are men over 50, with a plus 2 favorability rating, and upper-class voters, who also have a plus 2 favorability rating.

Interesting how hatred of AI maps so neatly onto whether or not it stands to directly diminish your personal and/or employment prospects! Gen Z is facing what is by some counts the worst entry level job market in 37 years; no wonder they utterly detest the tech product that everyone says is to blame. Meanwhile, men over 50, who tend to be richer, and upper-class voters, who, well, are richer, are less anxious about AI because they’re much more insulated from economic precarity.

In other words, the story of a man trying to save humanity from a future superintelligence was likely less resonant to the sympathetic masses than the story of a Gen Z man who threw a molotov cocktail at a CEO’s $27 million mansion (that is surrounded by the three $13 million houses he also purchased). Both narratives have one thing in common: They identify billionaire AI executives as uniquely powerful actors, who are all but unaccountable to democratic constraints and society’s best interests. We are witnessing the rise of a new aristocracy, as Jennifer Harris was perhaps the most recent to point out. The tech titans and AI executives are its avatars, calling the shots above everyone else about how we live and work, and which industrial projects will inhabit our backyards. This is the common fuel animating the rather disparate furies of militant doomers, anti-AI activists, data center protestors, and labor organizers alike: The profound antidemocratic tendency inherent in the modern AI project.

This is why it’s particularly rich that, in his blog, Altman says things like “AI has to be democratized; power cannot be too concentrated,” and “It is important that the democratic process remains more powerful than companies.” The first is fundamentally incompatible with nearly everything OpenAI is currently doing, and the second is one of Altman’s outright lies. As stated above, OpenAI aspires to nothing if not becoming one of the greatest concentrators of power of all time. After having deskilled artists and writers by ingesting their work into its models so its products can emulate them, OpenAI seeks to replace workers at firms and institutions around the world with a subscription cost to a technology it owns. Any subsequent profits will flow of course to Altman and the c-suite, yes, concentrating wealth and power.

As for democracy, well, OpenAI has done all it can to crush it. OpenAI joined a lobbying blitz aimed at helping the Trump administration push a 10-year moratorium on state lawmaking through Congress; it failed, but got an executive order aiming to do the same. OpenAI has spent millions lobbying against laws it doesn’t like in California and the European Union, trying to avoid regulation, laws, and other inconvenient outcomes of democracy. It is currently funding, along with VC firm Andreessen Horowitz, the Leading the Future PAC, which will spend $100 million to advance AI industry interests in the midterm elections, according to the Wall Street Journal.

This is also why OpenAI’s recent public policy document, which was released to acclaim from some of the most dimly credulous people on the planet, amounts to little more than a bad joke. It should be read as an attempt to head off public concern by suggesting AI might deliver a 32-hour working week and some other nebulous social benefits. Why would we believe for a second that an AI company that spends millions to degrade state capacity to do democracy at all, and works to quash a California bill that would require AI chatbots be proven safe for children, and tries to limit totally any liability for harms its products might cause in other state bills, and partners with the Trump administration as it bombs elementary schools and unleashes domestic terror on immigrants, would ever seriously expend an ounce of actual political capital for anything other than its own bottom line? We would be pretty stupid to. As Eryk Salvaggio pointed out in Tech Policy Press, the policy paper literally “proposes concepts OpenAI helped kill in California.”

Inequality is through the roof. A bona fide tech oligarchy is ascendant, buoyed by leverage provided by AI. Its data centers, which bring few jobs and hike electricity bills, are enraging communities on the right and the left. Slop is everywhere. AI-generated art and text are undercutting creatives, powered by pirated, non-consensually ingested work. Employers from Amazon to Block to Duolingo to Meta are firing tens of thousands of workers and citing AI as the reason. AI may one day cure cancer, we’re told; great, even if we believe that, who will be able to afford the treatment?

That’s the anger fueling the anti-AI violence. To the handwringing AI industry insiders blaming doomers and poor messaging, ordinary people are saying: Wake up. We have good reason to hate AI and the people who profit from it. And yes, as people get desperate, as young people increasingly feel like AI elites have mortgaged their future, as residents vote to regulate AI or ban local data center projects only to see their will overridden in favor of industry interests, well, how do you expect them to feel? What do you expect? There is a distinct risk of further escalation.

I do hope that this is a wakeup call to Altman and his peers. No one should experience violence as he did. Not a depressed teenager like Adam Raine who turns to ChatGPT and receives encouragement to kill himself. Not the students at a Canadian high school who were gunned down by a shooter who planned their action on ChatGPT, and whom multiple OpenAI employees had raised the alarm about, only to be ignored by management. Not the mother who was shot and killed after ChatGPT helped validate her son’s violent conspiracy theories.

If they want anti-AI rage to “de-escalate,” as Altman calls for, OpenAI and the other AI company leadership would have to make good on words that are currently hollow, and allow for individuals, communities, and victims to participate in a legitimate democratic process over the future of AI. They would stop pouring tens of millions of dollars into lobbying efforts and meddling in elections. They would respect community wishes on data centers. They would stop supporting efforts to ban AI lawmaking and to exempt themselves from product liability. If they were earnest about not wanting to concentrate wealth and power, well, they would find real ways to distribute it. Sam Altman might spend a little more time advocating for higher marginal tax rates and a little less driving around in one of his two McLaren F1s.

Of course, they’re not going to do any of those things. They’re going to continue building and hyping the ultimate automation software, perhaps choosing their words and marketing materials a bit more carefully, but perhaps not.

Near the end of his blog post, Altman writes,

My personal takeaway from the last several years, and take on why there has been so much Shakespearean drama between the companies in our field, comes down to this: “Once you see AGI you can’t unsee it.” It has a real “ring of power” dynamic to it, and makes people do crazy things. I don’t mean that AGI is the ring itself, but instead the totalizing philosophy of “being the one to control AGI”.

It seems to have slipped Altman’s mind that in the very metaphor he’s making—he’s comparing AI CEOs rushing to win the AI race to the men striving to wield the rings of power in the Lord of the Rings—the only way to ensure evil doesn’t triumph is to chuck the One Ring itself into Mount Doom.
