AI firms and their US military ties, "a whole civilization will die tonight" edition

Plus, Anthropic's new AI model that's 'too dangerous to release,' thoughts on the New Yorker's Sam Altman investigation, and more.

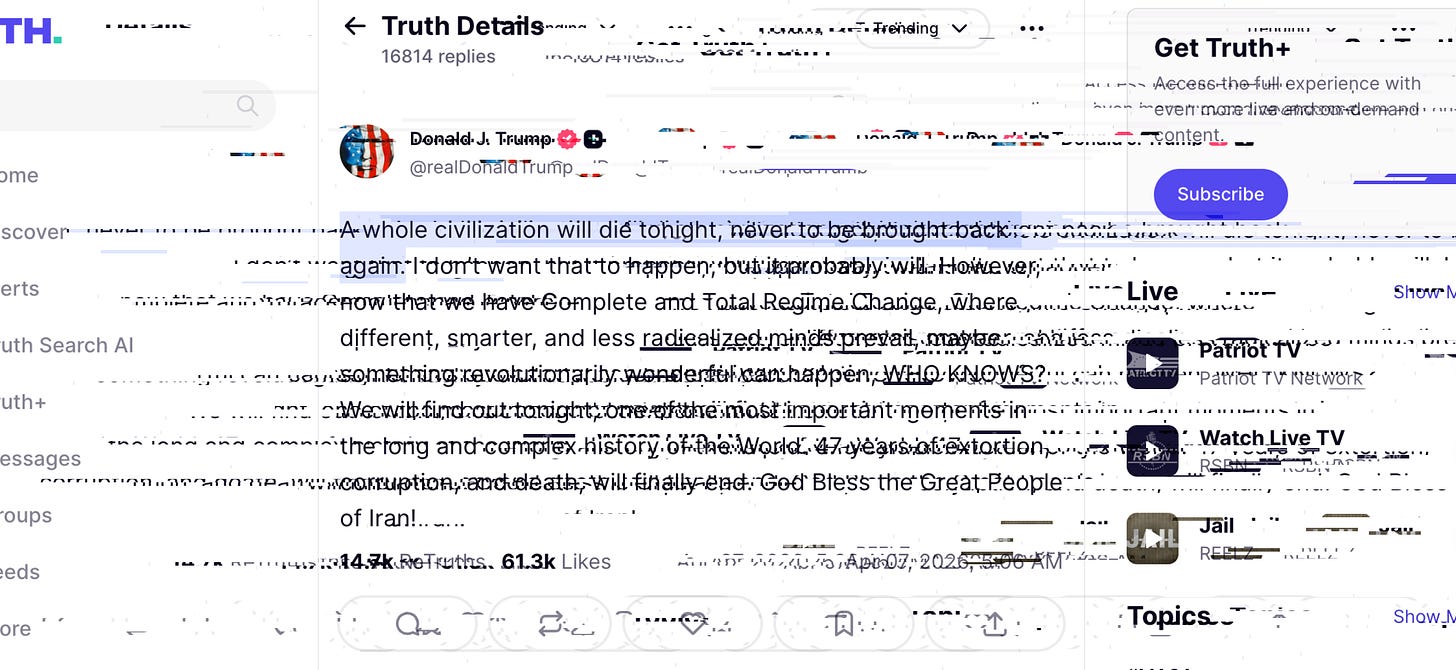

Everything about the Iran War has been horrific, but Trump’s now-infamous threat, issued on Truth Social, that “a whole civilization will die tonight, never to be brought back again,” signaled a distinct escalation. A threat of genocide, issued by a sitting US president, will do that.

That threat did not materialize, and now some apologists are saying that it was just one of Trump’s deranged bargaining tactics, as if that excuses such categorical declarations of mass violence from a US president. Most people, it turns out, are not OK with that; the commentariat was almost universally appalled, a lengthy list of Democrats have called for Trump’s removal from office on grounds of the 25th Amendment, and even some of his staunchest allies on the right—Tucker Carlson, Megyn Kelly, Alex Jones—were not just breaking ranks but angrily calling him out.

Yet there was one group that was (and remains) uniformly silent as Trump threatened to use the military, and presumably its store of nuclear weapons, to enact genocide: the leadership of the tech industry, which in recent months has inked numerous lucrative deals with that very same military. Currently, OpenAI, Microsoft, Google, Amazon, xAI, Oracle and even Meta have large contracts with the US military.

None of the leadership from any of those companies has expressed any discomfort with Trump’s genocidal threatmaking, let alone the entire war of aggression against Iran. It’s worth sitting with this for a moment, I think; the fact that Alex Jones has expressed more moral concern over a US president’s calls to kill an entire civilization than any major tech executive. (Any tech executive that I have seen, anyway, and I’ve spent the last couple of days looking.) It is occasion to examine the extent to which Silicon Valley has become an enabling partner in Trump’s military adventuring.

Now, the foundational story has been more or less the same since Silicon Valley began its embrace of Trump in earnest last year: The tech giants and largest AI firms made public shows of fealty, embedded themselves in the administration’s broader project and explicitly aligned their interests with Trump’s, in exchange for access to the state’s largesse, deregulatory agenda, and multifaceted support.

But this week should serve as a clarifying moment. The sitting American president explicitly promised a genocide, then forced the Iranian people to wait for hours to see if they would be bombed “into the stone age” and the rest of the world to see if he would start World War III in earnest this time. Even a few years ago, Silicon Valley executives might have spoken out against such horrific declarations, and against the intent to harness, in part, their AI, tools, and technological infrastructure to such abject ends. Now they are silent; content, apparently, as long as Trump continues to give them favorable land use policy for their data centers and state AI law moratoria. If an industry that rose to cultural dominance by promising to be a harbinger of progress—to improve people’s lives, to do no evil, to bring people together, to make a dent in the universe, and by using all of the above as a powerful recruitment tool—cannot draw the line here, then what’s left?

So, today, I want to drill into the myriad ways some of the top AI and tech firms—OpenAI, Google, Microsoft, Amazon, and Anthropic—are profiting directly from the US Department of War and their close ties to Trump. Even if not all of the tech firms listed above are explicitly building the technical infrastructure that enables mass killing—though some are—they have become pivotal to Trump’s geopolitical project nonetheless. They have provided his administration with financial support and credibility, and lent his project a veneer of forward-looking futurity, even as that project delivers regression, oppression, and violence on the ground. They have provided cloud and AI services to the military and affiliated government agencies. They are war profiteers.

The executives of these companies—the Sam Altmans, Elon Musks, and Sundar Pichais who travel to Mar-a-Lago, pose for photo opportunities with the president, and help funnel cash donations his way—may be lost causes, intent as they are on milking the president’s pro-AI posture for all that it’s worth, civilization-ending military decrees or not. But workers, who make these companies possible, are another story.

There was a time not long ago when the mere prospect of Google entering into a contract with the Department of Defense prompted mass walkouts and resistance—the largest worker action the tech industry has ever seen, by some counts. Mass firings, retribution for organizing, and an incursion of rightwing politics into tech have helped stymie or slow subsequent efforts, but some continue undeterred. And there are signs that discontent is brewing again. There are reasons to believe, as always, that if workers discover their power, they might transform even the richest, most hyped, and most powerful companies in the world.

So! Below, find a crash course in the top AI and tech firms’ military ties. After the fold, we’ll dive into the New Yorker’s long report on Sam Altman and OpenAI, Anthropic’s much-publicized release of Mythos, the AI model that’s “too dangerous to release,” and more. As always, I rely on paying subscribers to make this work possible; if you find value in it, and you can, please consider upgrading to a paid subscription. To those who already do, many, many thanks. OK! Onwards.

OpenAI

OpenAI most recently and perhaps most famously rushed to cut a deal with the Department of War after Anthropic refused to submit to demands that it allow its technology to be used to surveil Americans and in autonomous weapons. The deal is estimated to be worth “between $500 million and $2 billion over five years, based on comparable classified AI deployment agreements,” according to Tech Insider, though the specific dollar amount has not been made public.

(It’s also worth adding that, thanks to reporting from Ronan Farrow in the New Yorker, we know that the reason Anthropic had this contract in the first place and OpenAI did not was that the previous administration had deemed Sam Altman untrustworthy and believed he was potentially pursuing compromising interests through his development deals for data center projects in the Middle East.)

Last year, OpenAI inked a $200 million deal with the Department of War as part of its OpenAI for Government initiative, to help it “identify and prototype how frontier AI can transform its administrative operations, from improving how service members and their families get health care, to streamlining how they look at program and acquisition data, to supporting proactive cyber defense.”

OpenAI has also offered enterprise ChatGPT services to government agencies at a loss for $1 a year. Its president, Greg Brockman, donated $25 million to the MAGA Super PAC. OpenAI partnered with the United Arab Emirates and the Trump administration (and Softbank and Oracle and others) to announce Stargate, a data center conglomerate with installations in places like Texas and Abu Dhabi (which, incidentally, Iran recently threatened to attack) that claims it will ultimately invest $500 billion.

Of the AI firms that are not explicitly oriented around defense tech, like Palantir and Anduril, or run by an executive who is openly committed to rightwing politics, like Elon Musk’s X, OpenAI is the most ardent supporter of Trump’s project in general, and now, after Anthropic’s ouster, the most likely to be involved in providing information for combat operations.

While there have been flickers of discomfort among workers and prominent employees around this alliance, such as OpenAI’s chief futurist, Achiam, speaking out against Trump’s threat, it’s mostly been quiet.

If you’re an employee at OpenAI, and you agree with Achiam, one place to start proving that democracy can overcome those threats and reject such practices is in your own workplace. (I reached out to Achiam through his post, for what it’s worth, asking about the prospect of workplace organizing; I’ll update if I hear back.)

Google

Last year, Google was awarded a $200 million contract to, in its words, “accelerate AI and cloud capabilities across Department of Defense’s Chief Digital and Artificial Intelligence Office.”

Along with Oracle, Microsoft, and Amazon, Google won a contract with a $9 billion ceiling to provide Joint Warfighting Cloud Capability:

Through the contract, which has a $9 billion ceiling, the Pentagon aims to bring enterprisewide cloud computing capabilities to the Defense Department across all domains and classification levels, with the four companies competing for individual task orders.

NextGov also reports that Google has a major deal with the US Navy:

Google Public Sector was awarded the U.S. Navy’s ONENET contract in October to “provide highly specialized cloud services” for the U.S. Indo-Pacific Command. Google Public Sector does not publicly disclose revenue figures, but Google Cloud and its public sector cloud business have both experienced year-over-year revenue growth since 2022, with Google Cloud capturing $12.3 billion across all its business in the first quarter of 2025.

“I am feeling super confident in our ability to work with [the Trump] administration to show them that Google is all in,” the Google executive Karen Dahut is quoted as saying. “This is the most disruptive, and I mean that in a really positive way, because they are looking at this as an opportunity to bring real disruption to the way that government operates,” she said. “To drive efficiency, to drive cost effectiveness, to bring new emerging technology to bear to government, and to really make a difference for enterprise operations or mission.”

Google also famously inked a $1.2 billion joint deal with Amazon for Project Nimbus, to provide cloud compute and AI services to the Israeli government and military. Employee groups like No Tech for Apartheid have been protesting that contract for years now; some employees have been fired for staging sit-ins at the Google office. The same organizers and others are also protesting Google’s ties to ICE and the Department of Homeland Security, and though some have signed on in support of the effort, there’s been nothing approaching the actions of 2018, when thousands of employees stood up and walked out to spurn Project Maven.

There’s still some dissident spark among workers at Google, even though the harsh response from management to organizing and the current job climate make staging meaningful protest difficult. Try as it might, Google hasn’t been able to crush it. It remains to be seen whether, and how, those remaining efforts might expand.

Microsoft

Microsoft is probably the most prominent commercial tech firm with significant government contracts—notably, it landed a $22 billion contract to develop the U.S. Army's Integrated Visual Augmentation System (IVAS). That didn’t pan out so well though, and after development delays and reports of sick soldiers, the contract was spun into a partnership with Anduril.

Microsoft also had a $533 million enterprise service contract with the US Navy, which has since expanded. Its Azure OpenAI platform has been authorized “for U.S. Department of Defense workloads at Impact Level 6 (IL6),” aka the highest classification levels. “With this announcement, Azure OpenAI Service is now authorized for workloads at all U.S. Government data classification levels.”

Just last January, Microsoft, along with Google and Amazon, won an $800 million contract with the US Air Force—its slice was worth $170 million.

Microsoft is also supporting Scale AI in its Thunderforge project, the first effort to bring AI agents to the Department of War/Defense, in a deal worth an undisclosed billions of dollars:

Thunderforge marks the DoD’s first foray into integrating AI agents in and across military workflows to provide advanced decision-making support systems for military leaders.

Our team of global technology partners – including Anduril and Microsoft– will develop and deploy AI-powered solutions and custom agentic workflows (always under human oversight) to our mission partners initially at Indo-Pacific Command (INDOPACOM) and European Command (EUCOM). Anduril is integrating Scale AI’s LLM capability into its Lattice advanced modeling and simulation infrastructure to enhance mission planning. Microsoft is providing state of the art LLM technology to enable a leading edge, multimodal solution.

Microsoft is perhaps the most expansive tech defense contractor for the federal government (it’s also the oldest and largest supplier of digital infrastructure to government agencies in general) and its legacy status means its leadership doesn’t have to kiss Trump’s ring quite as obviously or obsequiously as OpenAI’s does. Microsoft is all but part of the blob now; a dull, apparently permanent fixture of the military industrial complex like Northrop Grumman or Raytheon/RTX. That does not mean it cannot or should not be protested, or that its leadership should be given a pass.

Amazon

Amazon is party to the above-mentioned JWCC contract, its own cloud compute deals, and the Anthropic-Palantir partnership; in addition to that, I recently wrote in depth about Amazon’s enablement of authoritarianism.

Anthropic

Yes, Anthropic should be given credit for refusing to continue its contract with the military after the Department of War demanded it amend provisions to allow its AI to be incorporated into state surveillance and autonomous weaponry. The move may have served Anthropic’s reputation as the more ethical, safety-minded AI company (or perhaps even been crucial to maintaining its credibility at all) but it was ultimately the more moral one to make—and served to well illustrate OpenAI’s relative unscrupulousness as the rival rushed to fill the void.

Still, Anthropic doesn’t exactly have clean hands here, either. After all, it signed the $200 million contract with the Trump Administration’s DoD in the first place. A year before that, it signed a different one, in partnership with Palantir and Amazon. Before Iran, Claude was reportedly used in the campaign to kidnap the Venezuelan president Nicolás Maduro.

But the example the company’s break with the Department of War sets is a crucial one: that AI companies can bow to public pressure under the right circumstances. Anthropic’s workers—and its consumers—expect a degree of moral behavior from the company, which can thus be pressured to back out of enabling the worst tendencies of the war machine.

The question becomes: how do we create the conditions for similar changes in these other companies? Antitrust action would help curb their size and power, but the prospect seems far-off; consumer boycotts can make for some embarrassing headlines, and public shaming of executives can make for some prodding in the right direction. It should, after all, be utterly toxic for any of these companies to contribute in any way to a president that promises genocide, to a war that was entirely unprovoked, and that immediately led to the death by American bomb of over a hundred little girls.

Despite the relative quiet, the best hope for structural change may be old-fashioned worker organizing. (In part due to a lack of many other meaningful avenues.) It’s not an easy choice to step up and speak out, to refuse to work on projects for a military carrying out such actions, a president willing to go to such extremes—but it’s clearly the moral one. Trump may or may not have “meant” his threat to destroy an entire civilization; what’s clear is that we stand on a precipice, with few actors capable of influencing his increasingly addled decision-making, his poster’s trigger finger. The Valley is home to some of those few actors who can.

On that big Ronan Farrow New Yorker cover story about Sam Altman and OpenAI

I eagerly tore into the New Yorker cover story, Ronan Farrow’s reportedly year-and-a-half-long investigation into Sam Altman with fellow staff writer Andrew Marantz, and, well, I have to say…