AI's aesthetics of failure

On the demise of Sora and our enduring revulsion to AI slop

One of the great ironies of the AI age, such as it is, is that it wound up looking like shit. When Artificial Intelligence finally arrived, with all of its fearsome technological sophistication, it was presumed that it would at least look cool as it surveilled, subverted, or enslaved us. Instead, even the biggest boosters of AI have been forced to disavow their technology’s chief aesthetic sensibility. “I don’t love slop myself,” Nvidia CEO Jensen Huang said in regard to a scandal over his company’s AI giving video game characters an unwanted makeover (perhaps notably, in the same week he declared that AGI has already been “achieved”).

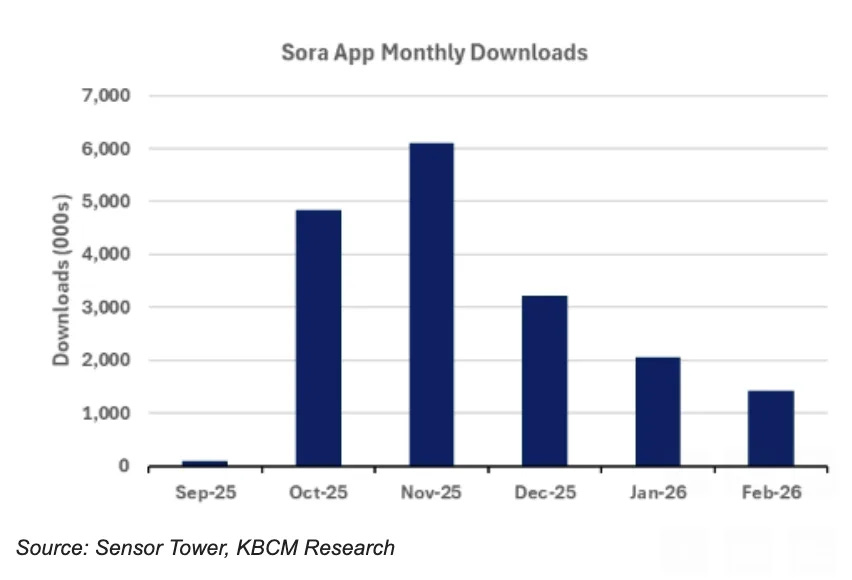

Revulsion at slop aesthetics certainly played a role in the collapse of Sora, OpenAI’s much-hyped and less-used video generation app; the company announced it was shuttering the operation this week amid a pointedly publicized effort to “nail” its core business and to not “get distracted by side quests.” (The $1 billion deal OpenAI cut with Disney is also dead.) Users simply did not seem to like Sora. The app, which was estimated as costing the company as much as $15 million a day, saw both downloads and monthly usage taper off rapidly after just two months of growth.

After peaking at 6 million monthly downloads in November, Sora fell to around a million and a half last month.

Monthly average users had already begun to decline as well. Originally pitched as a bona fide TikTok competitor and the revolutionizer of Hollywood in a single package, Sora turned out to be more OpenAI spaghetti thrown at the wall, an impressive tech demo dressed up into a product, rushed to market and breathlessly hyperbolized.

There’s a case to be made that the company was never all that serious about turning Sora into a successful service, and that it’s best to consider the app as one of OpenAI’s myriad instruments for keeping the hype cycle fed and the media hooked and the investment dollars flowing. (Given the economics and its intense compute demands, even if Sora had blown up, it’s hard to see how it would ever have turned them a profit.) Even if that’s true, it’s still very much worth considering why Sora tanked.

Theoretically, offering users the ability to appropriate, remix, or outright plagiarize a nearly limitless well of pop culture IP and to mess with their friends by dropping them and their digitally scanned visages into any kind of scenario, seems like an idea that could have legs. Yet it was cursed from the start. To begin with, Sora was just overwhelmingly unpleasant to look at and to use. I have an account—it is my solemn reportorial duty—and from time to time I’d log on to check in on the platform. Most times I did, it seemed that people were using Sora to push the boundaries of the platform’s own conspicuous tastelessness, mining that queasiness inherent in the Sora aesthetic. There was a kind of submeme where people gruesomely but not realistically peeled their faces off to reveal they were other people. There were a bunch of posts of giant women stepping on men and crushing them. Judge Judy arbitrating a court case between Obama and Trump. People driving their cars into mountains of human shit. That kind of thing.

It turns out that there was a limit to user interest in half-baked, glitched-out pop culture mashups or videos of anthropomorphized fruit having sex or AI CEOs hilariously placed in compromising situations or whatever.

It just wasn’t fun. The jokes felt almost incapable of landing unless they were folded directly into the narrow currents of cheap Adult Swim surreality that defined Sora’s vibe. The videos looked anywhere from dull and derivative to sickly and weird to unsettling and nightmarish. I regret not taking a screenshot, but I swear that at one point OpenAI served me a pop-up survey question that asked something like “how does using Sora make you feel?”, indicating the company was worried about the mental health of anyone who would spend more than a few swipes on the app. TechCrunch—TechCrunch—called Sora “the creepiest app on your phone.”

As such, Sora seems to have been used mostly by people who wanted to whip up a slop joke or slop commentary to share on another platform. Who can blame them? Why would anyone want to spend any longer than they had to in the corridors of a pulsing, feel-bad uncanny valley? Sora’s were the halls of pure slop, and not even AI CEOs themselves can stomach that slop.

Now, it’s often argued that AI cannot create anything truly new, that even the most sophisticated LLMs are fundamentally token-prediction systems, and thus the pixels its image-generators rearrange are necessarily an amalgam of shapes and styles all seen before. That AI image generators are intensively derivative, and that Sora was all but exclusively so, is undeniably true. And that I think is the root of its failure. LLMs strive to reproduce reality, or beloved aesthetics of the past, or even generally pleasing imagery, and they almost always fail. This failure is immediately apparent to us, for the same reasons that animate our discomfort with imagery in the uncanny valley in general, as well as some reasons beyond that.

This failure is not limited to, or even primarily concerned with, image quality. As the generators have improved in ironing out past telltales like the extra fingers and such (in prepping for this post I looked back at the original Sora videos and it was shocking to me how bad they were), our queasiness hasn’t subsided. AI image and video slop is not just homogeneous, and it’s not just derivative. Slop is a visual embodiment of the modern AI project itself; an in-progress effort to replicate, undermine, and replace human works. It’s fundamentally unsettling. (This is one reason that, as Gareth Watkins argued, AI is ideal for creating a new aesthetics of fascism.)

That’s one slapdash theory anyway, and one explanation for why, to this day, years into the AI boom, after so many billions in investment and numerous model improvements, whenever we encounter AI-generated imagery, we still tend to either recoil or roll our eyes. It would also explain why founders and execs, for whom AI imagery was originally a very useful demo of tech capabilities, now seem to wish it would go away. Slop is a pervasive reminder of both AI products’ persistent qualitative shortcomings and the noxious intent of the products themselves.

It’s also a reminder of just how little regard Silicon Valley seems to have for aesthetics in general anymore. I think it’s fitting that the same week that OpenAI announced the imminent shutdown of Sora, its splashiest showcase for AI, Meta announced the imminent shutdown of Horizon Worlds, its splashiest showcase for the metaverse. And if there was ever a technology that looked like shit, whose aesthetics screamed failure, well:

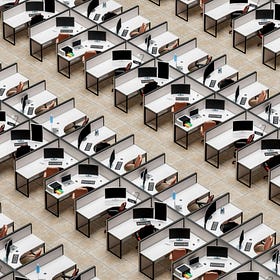

AI often gets compared to the metaverse, in the context of allegations that it’s Silicon Valley’s latest grift after that spectacular, much-hyped failure etc. But AI garners fewer comparisons to the metaverse as a project with similar qualitative dimensions, and similar objectives. Recall, Meta launched its metaverse pursuits (replete with company name-changing gravitas/bravura/etc) with digital entertainment meant to simulate the real world (Worlds) and a productivity program (Workrooms). The metaverse was also supposed to replace and streamline bodied work, and offer users a digital facsimile of the real world.

But of course it looked like garbage. It looked primed for failure from day one. It was too obviously unserious to register the uncanny valley anxieties AI-generated imagery does. Yes, the much-remarked upon lack of legs in the avatars, but also the laughable cartoonish aspects, the cursed attempted conjuring of a Facebook-but-in-real-life vibe, and so on.

Now, I’m not going to sit here and lament the passage of the Steve Jobsian ethos from the Valley, which elevated the importance of imbuing tech with taste, but I do think where we’ve landed since then is reflective of what’s motivating and incentivizing the industry in the AI era. In the 2000s, during web 2.0 and the rise of the smartphone, tech companies still very much needed to sell new users on their devices and digital platforms. It was pretty simple; Apple knew the iPhone had to look and feel good, or no one would want to learn how to use it. Aesthetics were core to the project, from the slick industrial design of the phone itself to the user interface design of the OS and the apps it would house.

Conversely, the apps that made the iPhone so successful—namely, social media—were predicated on an aesthetics of transparency. Facebook, Twitter, Instagram; these apps existed as conduits to share personal experiences with friends (and, yes, to glamorize or dramatize them, to induce more sharing). But the central idea was user connection, that the software would mostly get out of the way, so people could share photos, thoughts, memes and work.

It fits with Cory Doctorow’s enshittification thesis that after Apple et al. won and entrenched the phone as the core device through which the majority of us process the world, socially, for work, etc., becoming enormous monopolies in the process, concerns over the aesthetic dimensions of the project would diminish. The fading interest in serving the user, the consumer, the staidness of the monopoly; all that explains the curdling aesthetics of Silicon Valley design.

But AI (and the metaverse, and web 3) must be seen not as novel innovations but as new attempts by the same investor and developer class at extracting value from their past successes, at seeking out new revenue sources amid an already digitally saturated world. It was no longer enough for users to post photos taken with their iPhones on social media, or to use cloud enterprise software to organize and input work; AI companies want to generate those images themselves, perform the work themselves, and capture the value for themselves.

For years now, Silicon Valley has largely failed to produce something that most people want, or are even comfortable having in their lives; it has failed to make the case for AI to a public that mostly fears for their jobs, their energy bills, their children’s safety and future.

AI slop ensures that no one forgets this. No wonder OpenAI wants to pivot to focusing on enterprise AI, where no one has to look at the technology’s visual exports unless they are forced to by their boss. The general public dissatisfaction with AI is a result, in part, of being surrounded by so many obtrusive reminders of this grim outcome, and the attendant, even grimmer pursuit: That Silicon Valley will not stop until it has colonized, extracted, and automated all that it possibly can.

You might even go so far as to say that Sora was a crude, funhouse mirror-refracted vision of the world Silicon Valley is struggling to birth. Let’s not forget that most people who encountered it found it repulsive.

Further reading:

-What is “slop,” exactly? by Max Read

-Disney’s Sora Disaster Shows AI Will Not Revolutionize Hollywood by Jason Koebler at 404 Media

-AI Slop Education by Audrey Watters

-The Slop Tax: A Brief Introduction by Mike Pepi

-AI: The New Aesthetics of Fascism by Gareth Watkins

Non-slop must-read of the week:

Kevin Baker on military automation, Claude, and Project Maven:

Earlier this week, I broke news about a new basic income pilot program; the first of its kind to issue payments specifically to workers who have lost work or opportunities because of AI.

The first basic income for workers impacted by AI has begun sending out $1,000 monthly payments

The first basic income program for workers who have lost pay, jobs, or opportunities to AI began sending out its first funds this week. The program is run by the nonprofits the AI Commons Project and What We Will, who together are administering the AI Dividend, which will issue a no-strings payment of $1,000 a month for a year to a cohort of 25-50 impac…

The reaction to that story was, let’s say, pretty strong! A lot of commenters and readers criticized the program as insufficient or abetting the AI industry. Honestly, I love it; I welcome any and all strong, thoughtful reactions to my work and goings-on in AI or Silicon Valley or wherever else in general. It’s always good to hear from you.

In fact, it made me think I might have to start running some letters to the editor here. Let’s start with one from Anne Wanders in Germany:

Hi Brian,

First off, thanks so much for all your work! It’s so necessary, sadly.

You already made the observation that narrative around a need for UBI is feeding into the narrative of the proclaimed future when “AI” or even “AGI” will make human work obsolete on a massive scale. So in addition to that, just a quick note regarding the concept of an “AI dividend”:

The name “AI dividend” is yet another example of a brilliant marketing term, makes it sound as if laid off workers were investors in the tech … I think it’s bitter to pay a “dividend” to people losing jobs rather than to the people worldwide whose IP and personal data have been illegally used, repackaged and sold. If anything, we (creators, authors, private individuals etc.) should be paid the dividend because our work and personal data are the foundation of their business model.

I, and many others I know, want acknowledgement of what these companies did and do, I want pay for what has been scraped without consent, I want them to respect the opt-in principle of copyright or intellectual property according to applicable different legal concepts globally. (I live in Germany, the EU does not have “fair use” or “copyright”, my intellectual property exists the moment I create it without a need to register it formally!). So once that happened, once they paid all of us what is due including interest/royalties, call it a dividend or not, if after paying out all that money they still manage to operate their business, then would be a good time for them to pay taxes so a democratically elected government can help people affected by job loss.

To me, such an “AI dividend” is a free handout, further evidence of a condescending view of the people unwillingly contributing to and affected by the AI hype. It’s an attempt to circumvent the democratic and legal system.

Again, thanks so much for all your work, it means a lot and it helps me spread the word about what AI is and is not.

Best wishes,

Anne

These are good points! The first thing I will say is that a real challenge in running this kind of one-man newsletter operation is that there’s often no easy way to distinguish between ‘opinion’ and ‘news’ and ‘essay’, and it would even be kind of weird if I were to try to do so. BITM is composed of opinionated columns and essays (see above), more straightforward reporting, and so on. And sometimes those things are in tension; when I was reporting on DOGE’s firing of federal tech workers last year, I had a lot of opinions that didn’t necessarily seem appropriate to include in a news story. But then I might share those opinions in an edition just a few issues later. It’s a little messy.

So! Just because I am writing about a basic income project does not mean I am endorsing said project. I hope that’s clear in general, and from the presentation, tone, and inclusion of critical perspectives in the story, but it also makes a lot of sense that it would seem otherwise. After all, I condemn and endorse stuff all the time in these pages! I’ll surely write more on UBI in the future, but for now, suffice to say, I think this is a fascinating project that is very much a sign of the times, and in BITM’s wheelhouse. (And for the record, the UBI is being piloted by nonprofits that are not affiliated with AI companies, by worker-affiliated orgs who are trying to help blunt the impact of the AI economy.)

But thanks again—and always—for the comments, notes, emails, and social posts. All of it. I read them all, even if I don’t always have time to respond. I count myself lucky to be part of such an incredible critical community here. Cheers all, and hammers up.

Interesting point about the tech guys not even caring about aesthetics. Obviously enshittification is a big part of it, but are they so arrogant as to not realize that we hate them and their products and that a minimal effort might help, or are they just not as smart as anyone thinks they are, and the products they make just aren't that good?

Pretty telling that SV didn't really release AI video generators as production tools for filmmakers and creators (whatever one thinks of the merits of the technology, its most practical *eventual* use would be as a production or post-production tool) but rather as yet another feature to plug into their addictive doomscrolling platforms. The idea is not to build better tools for creators and developers but to keep you hooked on the black mirror.